Reflections from SMB 2012 – One

Introduction

The recent Society for Mathematical Biology annual meeting, 25-28 July, in Knoxville TN was an interesting interdisciplinary journey. Attendees had backgrounds in biology, medicine, mathematics, physics, engineering, computer science, ecology, and public health. Coming from my own computational physics background, I’m doing a few reflective posts on what struck me during the conference.

The meeting was hosted by NIMBioS and the University of Tennessee, Knoxville (UTK). NIMBioS has provided a post-meeting overview that includes comments, pictures, and a Storify of meeting tweets. There’s also a compendium of abstracts. I’ve also done my own time-line Storify, searching on both the #smb2012 meeting hashtag and along the time-lines of the principal meeting “tweeters”. The logistics of the conference were great. The UTK conference center was a nice venue and the conference provided both breakfasts and lunches, facilitating interstitial discussions. The Friday evening BBQ and contra dance was also great fun.

Major conference themes included modeling of tumors and spread of disease, and evolution of resistance. The conference schedule often had seven parallel tracks, so my individual reflections are unavoidably incomplete. The talks were run on a strict time schedule, so it was possible to move from one track to another to catch specific papers. This series of write-ups is an expansion of my tweeting at the conference, so inclusion or omission of any particular paper has no significance other than my being there and able to capture key points at the time.

Claire Tomlin

Claire Tomlin opened the conference with her plenary talk, Insights gained from mathematical modeling of HER2 positive breast cancer. Among her stage-setting comments was, “We want to use mathematical models to make predictions about aspects of the biology we don’t understand.” This adds to the context from her talk abstract.

In studying biological systems, often only incomplete abstracted hypotheses exist to explain observed complex patterning and functions. The challenge has become to show that enough of a network is understood to explain the behavior of the system. Mathematical modeling must simultaneously characterize the complex and nonintuitive behavior of a network, while revealing deficiencies in the model and suggesting new experimental directions.

You can learn structure and identify phenotypes by static observations. A stringent test of understanding, however, comes in creating a model that matches the dynamics of the real world, evolving in accord with observations. As I’m writing this, my Twitter stream informs me that it’s Louis Armstrong’s birthday. By synchronicity, the link given matches the idea of capturing correct system dynamics. Putting Tomlin’s concepts into Armstrong’s vernacular, It Don’t Mean a Thing, If It Ain’t Got That Swing.

To drop down into specifics, Claire Tomlin is looking at the HER2/HER3 (Human Epidermal growth factor Receptor) system and its recovery following intervention. Part of the key comes in elucidating the signaling network for HER2/HER3. The abstract from Amin et al. (2012) puts the HER2/HER3 signaling system in context.

HER2-amplified tumors are characterized by constitutive signaling via the HER2-HER3 co-receptor complex. While phosphorylation activity is driven entirely by the HER2 kinase, signal volume generated by the complex is under control of HER3 and a large capacity to increase its signaling output accounts for the resiliency of the HER2-HER3 tumor driver and accounts for the limited efficacies of anti-cancer drugs designed to target it. Here we describe deeper insights into the dynamic nature of HER3 signaling. Signaling output by HER3 is under several modes of regulation including transcriptional, post-transcriptional, translational, post-translational, and localizational control. These redundant mechanisms can each increase HER3 signaling output and are engaged in various degrees depending on how the HER3-PI3K-Akt-mTor signaling network is disturbed. The highly dynamic nature of HER3 expression and signaling, and the plurality of downstream elements and redundant mechanisms that function to ensure HER3 signaling throughput identify HER3 as a major signaling hub in HER2-amplified cancers and a highly resourceful guardian of tumorigenic signaling in these tumors.

Consistent with Tomlin’s engineering background, she’s considering control methodology, using different drugs at different times to “steer” the HER3 network to maximize treatment efficacy. Tomlin and Axelrod (2005) do a nice job of describing control theory applied to biology. Tomlin was not the only one mentioning control theory at the conference. It’s become an important enough topic in understanding biological systems to have prompted a book, Feedback Control in Systems Biology. In short, control theory deals with providing input to a system to “steer” it along a desired path or series of states. When a feedback loop is included, deviations from the desired path are detected and additional corrections are made. The trick is to understand response lag and to avoid over-correcting.
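To make the lag and over-correction point concrete, here is a minimal sketch of a proportional feedback loop in Python. It is not Tomlin's model or anything shown at the conference. The function name, the gain values, and the delay are all illustrative assumptions. The controller nudges a state toward a target, but it only sees a measurement that is a few steps old.

```python
from collections import deque


def simulate(gain, delay_steps, target=1.0, steps=60):
    """Steer a state x toward target using proportional feedback.

    The controller only sees a measurement that is delay_steps old,
    which stands in for response lag. All values are illustrative.
    """
    x = 0.0
    # Buffer of past states; index 0 is the oldest (stale) observation.
    history = deque([x] * (delay_steps + 1), maxlen=delay_steps + 1)
    trajectory = [x]
    for _ in range(steps):
        measured = history[0]
        correction = gain * (target - measured)
        x = x + correction
        history.append(x)  # the oldest entry drops off automatically
        trajectory.append(x)
    return trajectory


if __name__ == "__main__":
    prompt_feedback = simulate(gain=0.5, delay_steps=0)
    lagged_feedback = simulate(gain=0.5, delay_steps=3)
    gentle_lagged = simulate(gain=0.2, delay_steps=3, steps=300)
    print("no lag, peak value:       ", max(prompt_feedback))
    print("lag of 3, peak value:     ", max(lagged_feedback))
    print("lag of 3, lower gain, end:", gentle_lagged[-1])
```

With no lag, the state creeps up to the target without passing it. With the same gain and a three-step lag, the controller keeps pushing on stale information and overshoots. Lowering the gain is one simple way to settle down again, at the cost of a slower response.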

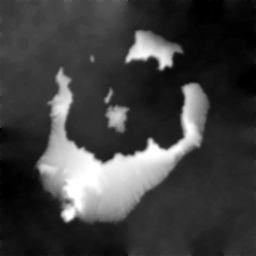

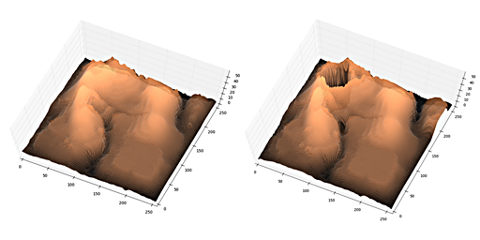

Sometimes before tackling a hard problem, it’s wise to practice with a simpler one. As a step toward working with such signaling systems, Tomlin shifts to looking at a simpler model for Drosophila wing hairs. The wing cells know how they are oriented and grow a single wing hair on the lateral side. Chemically disrupting one cell disrupts adjacent cells, indicating transfer of information from one cell to the next. She models (Ma, 2008) concentrations of four core signaling proteins known as Frizzled (Fz), Disheveled (Dsh), Prickle (Pk), and Van Gogh (Vang). As outlined in the supplemental material appendix of Ma (2008), a reaction-diffusion system of ten Ordinary Differential Equations (ODEs) is solved for a combination of cells and cell edges.

The ODEs were solved using CVODES. I note this largely because CVODES is now part of SUNDIALS (SUite of Nonlinear and DIfferential/ALgebraic equation Solvers), a project I was once part of while at LLNL.
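For a feel of what solving ODEs over a set of coupled cells involves, here is a toy sketch in Python. It is emphatically not the Ma (2008) model. It tracks one made-up concentration per cell in a short chain, with neighbor-to-neighbor exchange and optional decay, and it swaps in a hand-written fixed-step Runge-Kutta integrator where the original work used CVODES. The names `rhs`, `rk4_step`, `integrate`, and the parameters `D` and `k` are my own assumptions.

```python
def rhs(c, D, k):
    """dc_i/dt = D*(c_{i-1} - 2*c_i + c_{i+1}) - k*c_i, with no-flux ends."""
    n = len(c)
    out = []
    for i in range(n):
        left = c[i - 1] if i > 0 else c[i]        # mirror at the boundary
        right = c[i + 1] if i < n - 1 else c[i]
        out.append(D * (left - 2.0 * c[i] + right) - k * c[i])
    return out


def rk4_step(c, dt, D, k):
    """Advance all cells by one classical fourth-order Runge-Kutta step."""
    def shifted(base, slope, h):
        return [b + h * s for b, s in zip(base, slope)]

    k1 = rhs(c, D, k)
    k2 = rhs(shifted(c, k1, dt / 2.0), D, k)
    k3 = rhs(shifted(c, k2, dt / 2.0), D, k)
    k4 = rhs(shifted(c, k3, dt), D, k)
    return [
        ci + dt / 6.0 * (a + 2.0 * b + 2.0 * g + d)
        for ci, a, b, g, d in zip(c, k1, k2, k3, k4)
    ]


def integrate(c0, t_end, dt=0.01, D=1.0, k=0.0):
    """Integrate from t=0 to t_end with a fixed step dt."""
    c = list(c0)
    for _ in range(int(round(t_end / dt))):
        c = rk4_step(c, dt, D, k)
    return c


if __name__ == "__main__":
    # Disturb only the middle cell and watch the neighbors respond.
    start = [0.0, 0.0, 1.0, 0.0, 0.0]
    print(integrate(start, t_end=1.0))
```

Disturbing the middle cell changes its neighbors within a short time, which is the qualitative cell-to-cell transfer described above. A production solver such as CVODES adds adaptive step sizes and stiff methods that this sketch leaves out.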

Transforming Biology Education

One of the sessions I dropped in on was on transforming first-year biology education. This session, convened by Carrie Diaz Eaton (Unity College), drew its motivation from the BIO 2010 report, aimed at transforming biology education to develop 21st century biomedical research skills. In its own way, this session was a self-referential exercise in control theory: how to steer biology students through acquiring calculus without triggering activation of latent math phobias.

Erin Bodine (Rhodes College) talked about adding matrix math and Matlab use to biology major courses. Her longer term goal is to launch a biomath major. In her biomath course, Bodine introduces the concepts of feedbacks, derivatives, discrete models, and continuity.

A problem Bodine faced was that her biomath course was not being counted as an elective by either the math or the biology department. This in-between existence, while unfortunate, is far from unique. There have long been roadblocks to efforts outside of the traditional disciplinary “cell walls”, articles by Austin (2003), Rhoten and Parker (2004), and Paytan and Zoback (2007) being examples. This topic also spawned the NAS/NAE/IOM report Facilitating Interdisciplinary Research (2004).

As I listened to Bodine, I remembered Gil Strang’s (MIT) comments in the Recitation 1 video of his Computational Science & Engineering class.

This is the one and only review, you could say, of linear algebra. I just think linear algebra is very important. You may have got that idea. And my website even has a little essay called Too Much Calculus. Because I think it’s crazy for all the U.S. universities do this pretty much, you get semester after semester in differential calculus, integral calculus, ultimately differential equations. You run out of steam before the good stuff, before you run out of time. And anybody who computes, who’s living in the real world is using linear algebra. You’re taking a differential equation, you’re taking your model, making it discrete and computing with matrices. The world’s digital now, not analog.

Strang also developed a Highlights of Calculus course for high school. Just to fill out the bill, Cornette and Ackerman’s Calculus for Life Sciences is also available online under a Creative Commons license.

Moving onward, Sarah Hews (Hampshire College) teaches Calculus in Context. She remarks that, unlike many, she is largely free to create a class as she wishes. Part of her approach, in the first couple of weeks, is using research articles to introduce biomath concepts. In particular, she uses Mumby et al.’s 2007 letter to Nature, Thresholds and the resilience of Caribbean coral reefs. Hews spoon-feeds this first article to students, using worksheets to guide their reading and get the sense of an ODE.

Timothy Comar (Benedictine University) talked about the transition from biocalculus to undergraduate research, i.e., getting our hands dirty. He mentioned getting students to understand stability and bifurcation, and discussed predator/prey modeling with impulses (e.g., spraying pesticide). Comar also uses a network model (nodes, weighted edges) to study human spreading of an invasive plant, for example, along railroad lines.
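As a rough illustration of what predator/prey modeling with impulses can look like, here is a small Python sketch. It is not Comar's model. It uses the textbook Lotka-Volterra equations with made-up coefficients, and treats a pesticide application as an instantaneous knock-down of the prey (pest) population at regular intervals. The parameter names `spray_every` and `kill_fraction` are hypothetical.

```python
def lv_rhs(prey, pred, a=1.0, b=0.5, c=0.5, d=0.2):
    """Classic Lotka-Volterra rates; coefficients are illustrative only."""
    dprey = a * prey - b * prey * pred
    dpred = d * prey * pred - c * pred
    return dprey, dpred


def rk4_step(prey, pred, dt):
    """One classical Runge-Kutta step for the two-species system."""
    k1 = lv_rhs(prey, pred)
    k2 = lv_rhs(prey + dt / 2.0 * k1[0], pred + dt / 2.0 * k1[1])
    k3 = lv_rhs(prey + dt / 2.0 * k2[0], pred + dt / 2.0 * k2[1])
    k4 = lv_rhs(prey + dt * k3[0], pred + dt * k3[1])
    prey += dt / 6.0 * (k1[0] + 2.0 * k2[0] + 2.0 * k3[0] + k4[0])
    pred += dt / 6.0 * (k1[1] + 2.0 * k2[1] + 2.0 * k3[1] + k4[1])
    return prey, pred


def simulate(prey0, pred0, t_end, dt=0.01, spray_every=None, kill_fraction=0.0):
    """Integrate the ODEs, applying an impulse to the prey at each spray time."""
    steps_per_spray = int(round(spray_every / dt)) if spray_every else None
    prey, pred = prey0, pred0
    history = [(0.0, prey, pred)]
    for n in range(1, int(round(t_end / dt)) + 1):
        prey, pred = rk4_step(prey, pred, dt)
        if steps_per_spray and n % steps_per_spray == 0:
            prey *= 1.0 - kill_fraction   # instantaneous jump, not a rate
        history.append((n * dt, prey, pred))
    return history


if __name__ == "__main__":
    for t, prey, pred in simulate(2.0, 1.0, t_end=10.0, spray_every=2.0,
                                  kill_fraction=0.5)[::100]:
        print(f"t={t:5.2f}  prey={prey:7.3f}  predators={pred:7.3f}")
```

The key design point is that the impulse is applied between integration steps as a jump in the state, separate from the continuous dynamics. Students can then vary the spray interval and kill fraction and look at how the long-run behavior changes.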

Listening to Comar, I’m reminded of Steven Strogatz’s book and videos on YouTube on nonlinear dynamics and chaos.

So, I’ll stop here for this first post, with more to come shortly in reasonable increments.